Have you ever invested heavily in an AI initiative, only to watch it stall or fall short of expectations?

While building AI solutions is challenging in its own right, selecting an AI development partner often becomes the deciding factor between success and failure.

In fact, industry research suggests that nearly 80% of AI projects fail to deliver measurable business value, not because of weak ideas, but because of poor AI vendor assessment and misaligned execution capabilities.

This is where a structured AI vendor evaluation checklist becomes essential. Rather than relying on assumptions or surface-level claims, this guide helps teams evaluate AI development companies with clarity, consistency, and measurable criteria.

In this blog, you’ll learn how to:

- Assess AI vendor selection criteria across technical, architectural, and operational dimensions

- Perform effective AI vendor due diligence using a structured scorecard

- Compare AI vendors objectively and choose an AI implementation partner aligned with long-term business goals

Let’s get into it.

How Can You Evaluate an AI Vendor’s Technical Capabilities?

Evaluating an AI vendor involves more than checking technical claims. What truly matters is the vendor’s ability to deliver AI systems that work reliably in real business environments.

A structured evaluation of AI solution providers helps teams move past surface-level promises and focus on practical delivery capabilities.

This stage of AI development partner selection sets the foundation for every decision that follows. When technical readiness is assessed early, teams reduce delays, control costs, and avoid avoidable rework later in the project lifecycle.

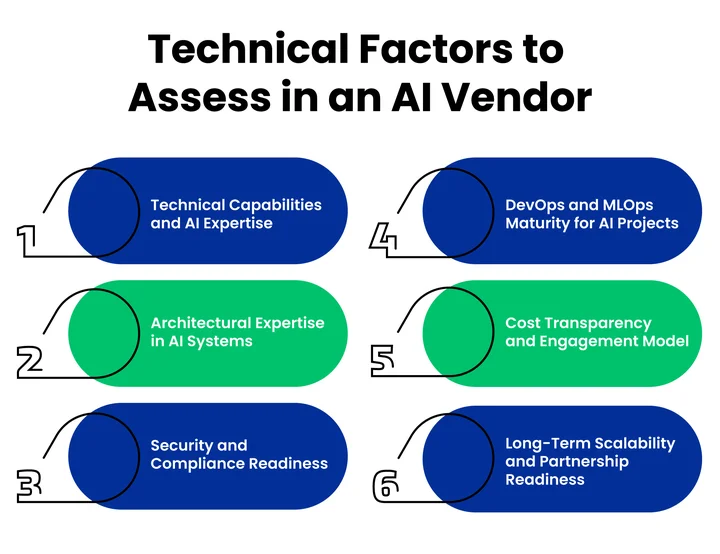

1. Technical Capabilities and AI Expertise

Technical capability serves as the starting point for any meaningful AI vendor assessment. Many vendors can build models, but fewer can deploy them successfully at scale. This evaluation step focuses on execution rather than experimentation.

Start by reviewing the vendor’s experience with AI model development and implementation. Real-world deployments reveal how well the vendor handles challenges such as data quality issues, performance optimization, and system reliability. These factors matter far more than theoretical expertise.

Industry familiarity also plays a role in evaluating AI consulting and solution providers. Vendors with domain experience understand sector-specific constraints, compliance requirements, and data behavior. This insight often leads to faster development cycles and fewer surprises.

Finally, assess how results are validated. Reliable vendors measure success through model accuracy, stability, and business impact. Clear performance metrics signal that the vendor delivers outcomes, not just code.

2. Architectural Expertise in AI Systems

Once technical capability is confirmed, attention should shift to system design. Even strong AI models struggle when the underlying architecture cannot support integration or growth. Architecture acts as the bridge between AI capabilities and business operations.

An effective AI technology partner evaluation includes reviewing how vendors design modular, scalable AI systems. Architecture should support performance, flexibility, and long-term adaptability.

Data pipelines, deployment workflows, and system monitoring practices offer strong indicators of architectural maturity.

For teams managing complex AI deployments, cloud engineering expertise can help maintain infrastructure scalability, integration, and system performance.

Integration capability matters just as much. Vendors should demonstrate experience connecting AI solutions with existing enterprise systems, APIs, and cloud platforms. Smooth integration reduces operational disruption and accelerates adoption across teams.

3. Security and Compliance Readiness

As AI systems rely heavily on sensitive data, security and compliance cannot remain secondary considerations. Strong safeguards protect both the organization and the AI solution’s long-term viability.

Evaluation should begin with data protection practices. Secure storage, controlled access, and encrypted data flows reflect operational discipline. These measures reduce exposure during training, deployment, and ongoing usage.

Regulatory awareness also plays a role in AI vendor due diligence. Vendors should demonstrate a working understanding of relevant regulations such as GDPR, HIPAA, or industry-specific standards. Governance practices such as auditing, accountability, and model oversight further indicate maturity.

Security readiness builds trust and reduces risk. Vendors that treat compliance as an ongoing responsibility offer stronger long-term reliability.

4. DevOps and MLOps Maturity for AI Projects

AI projects often face challenges after deployment. Operational maturity determines whether models continue to perform as expected over time. This makes DevOps and MLOps practices essential evaluation criteria.

Reliable vendors use automated workflows for model training, testing, and deployment. Automation improves consistency and reduces human error. Ongoing monitoring helps detect performance issues, data drift, and system anomalies early.
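
One common way to quantify data drift is the Population Stability Index (PSI), which compares how a feature was distributed at training time with how it is distributed in recent production data. The sketch below is a minimal, standard-library illustration rather than any vendor's actual monitoring stack; the function name `psi`, the ten-bin layout, and the sample data are assumptions made for the example.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline sample and a recent sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp values outside the baseline range
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical data: a baseline feature and a production sample shifted upward.
baseline = [i / 100 for i in range(100)]
shifted = [0.5 + i / 200 for i in range(100)]

drift_none = psi(baseline, baseline)
drift_large = psi(baseline, shifted)
```

A frequently cited rule of thumb treats a PSI below 0.1 as stable and above 0.25 as a significant shift, though thresholds are usually tuned per feature. Asking a vendor which drift metric they track, and at what threshold they alert, is a quick maturity check.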

Lifecycle management also deserves close attention. Vendors should show how models are updated, retrained, and maintained as business requirements change. Historical performance data, such as uptime, response times, and system stability, offers useful insight into operational reliability.
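
When a vendor shares historical performance data, two figures are easy to verify independently: availability over a period and a high-percentile response time. The following is a rough sketch under stated assumptions; the helper names `availability_pct` and `percentile`, the 30-day period, and the sample numbers are all hypothetical.

```python
def availability_pct(downtime_minutes: float, period_days: int = 30) -> float:
    """Percentage of the period during which the service was reachable."""
    total_minutes = period_days * 24 * 60
    return 100.0 * (1 - downtime_minutes / total_minutes)


def percentile(values: list[float], pct: int) -> float:
    """Nearest-rank percentile; pct is an integer from 1 to 100."""
    ordered = sorted(values)
    rank = -(-len(ordered) * pct // 100)  # ceiling division without floats
    return ordered[max(rank, 1) - 1]


# Hypothetical month of operations: 43.2 minutes of downtime, 100 latency samples.
latencies_ms = [float(ms) for ms in range(1, 101)]
monthly_availability = availability_pct(43.2)
p95_latency = percentile(latencies_ms, 95)
```

Percentile latency is generally more informative than an average, because a small number of very slow responses can hide behind a healthy-looking mean.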

Strong DevOps and MLOps maturity signals that the vendor can support AI solutions beyond initial launch.

5. Cost Transparency and Engagement Model

Technical capability alone does not guarantee project success. Pricing clarity and collaboration structure shape how smoothly an AI initiative moves forward. Clear financial models help teams plan accurately and maintain trust throughout the engagement.

Cost evaluation should focus on transparency and predictability. Vendors may offer fixed, subscription-based, or usage-based pricing models.

Clear cost breakdowns help teams track spending and avoid unexpected charges. Visibility into how return on investment is measured further strengthens the evaluation of an AI development company.
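
To make pricing models comparable, it can help to project each one over the same time horizon and workload. The sketch below assumes a hypothetical vendor quote with three options and a simple ROI definition (net gain divided by cost); every figure, key name, and function name here is illustrative and not taken from any real vendor.

```python
def total_cost(model: str, months: int, monthly_requests: int, quote: dict[str, float]) -> float:
    """Projected spend over `months` for one pricing model in a vendor quote."""
    if model == "fixed":
        return quote["project_fee"]
    if model == "subscription":
        return quote["monthly_fee"] * months
    if model == "usage":
        return quote["price_per_1k_requests"] * (monthly_requests / 1000) * months
    raise ValueError(f"unknown pricing model: {model}")


def roi(benefit: float, cost: float) -> float:
    """Simple ROI: net gain divided by cost."""
    return (benefit - cost) / cost


# Hypothetical quote and workload: 12 months at 2 million requests per month.
quote = {"project_fee": 120_000.0, "monthly_fee": 9_000.0, "price_per_1k_requests": 4.0}
costs = {m: total_cost(m, 12, 2_000_000, quote) for m in ("fixed", "subscription", "usage")}
cheapest = min(costs, key=costs.get)
fixed_roi = roi(benefit=180_000.0, cost=costs["fixed"])
```

Rerunning the projection with a higher request volume shows where usage-based pricing overtakes the other options, which is the kind of predictability question worth raising with a vendor before signing.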

To support faster comparison, the following factors help clarify cost expectations:

Cost Transparency:

| Aspect | What to Check | Why It Matters |
|---|---|---|
| Pricing Structure Clarity | Fixed, subscription-based, or usage-based pricing | Clarifies how costs are calculated and avoids surprises |
| Cost Predictability and Budget Control | Detailed estimates and expense tracking | Supports budget discipline and reduces financial risk |
| Visibility into AI Project ROI | Measurement of business value delivered | Helps assess financial impact and long-term value |

Pricing clarity alone is not enough. The engagement model also plays a significant role in day-to-day collaboration and long-term outcomes.

Some vendors provide dedicated teams, while others follow hybrid or project-based approaches. The right model depends on project scope, internal capabilities, and long-term goals.

Flexibility matters here. Vendors that allow scope adjustments without hidden costs support smoother execution and stronger working relationships.

Long-term partnership readiness should also be evaluated. Vendors capable of scaling support as AI initiatives grow offer continuity and sustained value over time.

6. Long-Term Scalability and Partnership Readiness

The final evaluation step looks ahead. AI initiatives rarely remain static, and vendor readiness for growth affects long-term outcomes.

Scalability assessment should cover the vendor’s ability to support increasing data volumes, more complex models, and expanding workloads. Infrastructure planning across cloud or on-prem environments plays a key role in maintaining consistent performance.

Partnership mindset also matters. Vendors prepared to grow alongside your organization offer more than technical support. They contribute strategic alignment, continuous improvement, and sustained value. This quality often defines a successful AI implementation partner.

Each evaluation area builds on the previous one. Technical capability establishes feasibility. Architecture ensures stability. Security protects trust. Operations maintain reliability. Cost clarity supports collaboration. Scalability secures the future.

When these elements are assessed together, organizations gain a clear framework for choosing an AI development partner with confidence. This structured approach transforms vendor selection into a strategic decision rather than a reactive choice.

How Can an AI Vendor Evaluation Scorecard Help You Choose the Right Partner?

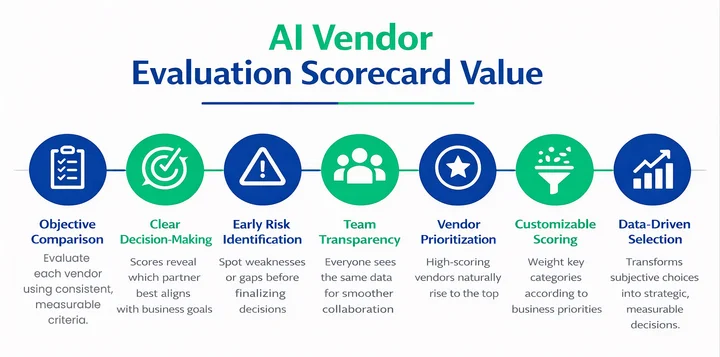

An AI vendor evaluation checklist gives structure, but a scorecard takes it a step further. It turns observations into measurable data, making the selection of an AI development partner clear and objective.

The scorecard works like a simple rating system. Each vendor is scored against key factors, producing a total score that highlights the best fit. This method reduces guesswork and helps teams compare vendors fairly.

Here’s how a scorecard adds value:

- Objective comparison: Instead of relying on impressions, each vendor is evaluated with consistent metrics.

- Clarity in decision-making: Scores make it easy to see which vendor aligns best with business goals.

- Quick identification of gaps: Weak areas or risks become visible early, allowing informed discussions.

- Transparency across teams: Everyone involved sees the same data, making collaboration smoother.

- Vendor prioritization: High-scoring vendors naturally rise to the top, simplifying next steps.

The process is simple. Assign a score to each checklist category, sum the scores, and review the rankings. Scores can be numeric or color-coded for easy interpretation. Teams can also weight certain categories more heavily when some aspects matter more to the business.
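
The scoring process described above can be sketched in a few lines. This is a minimal illustration, assuming a 1 to 5 rating per category and percentage weights chosen by the evaluating team; the weights, vendor names, and scores are hypothetical placeholders, not recommended values.

```python
# Hypothetical category weights in percent; adjust to reflect business priorities.
WEIGHTS = {
    "technical": 25,
    "architecture": 20,
    "security": 20,
    "mlops": 15,
    "cost": 10,
    "scalability": 10,
}


def weighted_score(scores: dict[str, float], weights: dict[str, int] = WEIGHTS) -> float:
    """Weighted average of per-category scores (each rated 1 to 5)."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(scores[c] * w for c, w in weights.items()) / sum(weights.values())


def rank_vendors(vendors: dict[str, dict[str, float]]) -> list[tuple[str, float]]:
    """Return (vendor, score) pairs sorted from highest to lowest score."""
    ranked = [(name, round(weighted_score(s), 2)) for name, s in vendors.items()]
    return sorted(ranked, key=lambda item: item[1], reverse=True)


# Hypothetical ratings gathered from the six checklist categories.
vendors = {
    "Vendor A": {"technical": 5, "architecture": 4, "security": 3, "mlops": 4, "cost": 3, "scalability": 4},
    "Vendor B": {"technical": 4, "architecture": 4, "security": 5, "mlops": 4, "cost": 4, "scalability": 3},
}
ranking = rank_vendors(vendors)
```

Raising an error on a missing category is a deliberate choice in this sketch: it keeps a vendor from ranking well simply because one area was never assessed.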

A well-structured AI vendor evaluation scorecard ensures that AI development company evaluations are consistent, objective, and aligned with strategic goals.

It also strengthens AI technology partner evaluation, AI consulting company selection, and AI implementation partner decisions.

Using a scorecard transforms vendor selection from a subjective choice into a strategic, data-driven decision, giving confidence that the chosen partner will deliver measurable results.

All Set to Choose AI Vendors with Confidence?

- Quickly evaluate vendors across technical, architectural, security, DevOps, cost, and scalability criteria.

- Make informed and confident decisions backed by structured analysis.

- Save time and reduce risk by consolidating all evaluation factors into a single, actionable checklist.

How Can Clustox Help You Choose the Right AI Development Partner?

It can be challenging to pick the best AI development partner. Clustox simplifies the process and provides clear guidance at every step. Their approach focuses on aligning AI solutions with your business goals while reducing risk.

Here is how Clustox helps organizations make confident decisions:

- Expert Guidance Across AI Domains: Teams at Clustox have hands-on experience in machine learning, natural language processing, computer vision, and predictive analytics. This helps you understand what solutions are feasible and which vendors can deliver results.

- Structured Assessment Support: Clustox guides you through tools like the AI vendor evaluation scorecard. This makes it easy to compare vendors objectively and identify the best fit.

- Integration and Scalability Planning: Solutions are designed to connect smoothly with existing systems. Clustox also ensures they can grow alongside your business needs.

- Security and Compliance Assurance: Clustox prioritizes data protection and regulatory compliance. Their knowledge of GDPR, HIPAA, and industry standards reduces operational and legal risk.

- Operational Reliability: Mature DevOps and MLOps practices maintain model performance over time. Clustox helps vendors implement workflows that reduce errors and keep AI solutions running efficiently.

- Transparent Collaboration: Clear engagement models and transparent pricing enable teams to plan budgets accurately and avoid surprises.

Together, these capabilities help organizations conduct effective AI vendor assessments, strengthen AI technology partner evaluations, and select an AI implementation partner that delivers measurable outcomes.

Ultimately, Clustox does not just provide technical expertise. They guide teams through the decision-making process, making AI initiatives more predictable, efficient, and successful.

Conclusion

Evaluating an AI vendor requires careful attention to technical capabilities, architecture, security, DevOps maturity, cost transparency, and long-term scalability. Understanding these factors early helps prevent wasted resources and ensures AI initiatives deliver measurable business value.

Using structured evaluation methods brings clarity and confidence to decision-making, while tools like the AI Vendor Evaluation Scorecard allow you to objectively compare vendors and identify the partner best suited to your business needs.

If operational efficiency and smooth AI deployment are key concerns, using expert Cloud & DevOps services can help ensure that AI solutions integrate seamlessly, scale reliably, and maintain performance over time. Applying this checklist alongside proven operational practices simplifies complex decisions and increases the likelihood of successful AI project outcomes.

Take the next step to assess vendors efficiently and make confident, strategic decisions for your AI projects.

Frequently Asked Questions (FAQs)

2. What are the Essential Questions to Ask an AI Development Partner?

Asking the right questions early helps surface risks before a contract is signed. Useful questions include:

- Can you demonstrate successful projects similar to ours?

- How do you maintain model accuracy and reliability?

- What security and compliance measures are in place?

- How do you manage ongoing updates and monitoring of AI models?

3. How Do You Assess Security and Compliance of an AI Vendor?

Security and compliance are critical for protecting sensitive data. Ensure the vendor follows:

- Data protection protocols: encryption, access control, secure storage

- Regulatory compliance: GDPR, HIPAA, or industry-specific rules

- Governance practices: monitoring, auditing, and accountability policies

Vendors strong in these areas reduce operational and legal risks.

4. What Should You Look for in Cost Transparency and Engagement Models?

Unclear pricing can derail AI projects. Look for:

- Transparent pricing structure (fixed, subscription, or usage-based)

- Predictable budgets and ROI visibility

- Flexible engagement models to accommodate project changes

These factors ensure smoother collaboration and financial clarity.

5. How Can You Evaluate a Vendor’s Scalability for Long-Term AI Projects?

Assess whether the vendor can handle growing workloads and scale infrastructure without performance drops. Additionally, consider their ability to support long-term collaboration and evolving business needs. A scalable vendor ensures AI projects remain efficient and sustainable.

6. Why Should You Use an AI Vendor Evaluation Scorecard?

The scorecard consolidates all evaluation criteria into a single structured framework. Furthermore, it helps compare vendors objectively, highlights strengths and gaps, and increases confidence in your decision-making. Using this tool reduces risks and improves the chances of a successful AI project.

Will your AI project deliver measurable results, or risk joining the 80% of initiatives that fail due to weak vendor evaluation?
