CTOs must know that secure AI deployment means controlling what AI agents can access, process, and act upon.

Because businesses are rapidly moving from experimenting with AI models to deploying autonomous AI agents. These AI agents help them to make decisions, access sensitive data, and execute workflows across critical systems.

They are combining generative intelligence with real-world execution to enhance capabilities such as customer service, analytics, and operational automation.

IBM reported that the global average cost of a data breach reached $4.45 million. The report also highlights the financial impact of inadequate data governance and security controls in digital systems.

However, this new capability introduces unique AI agent security and compliance challenges. They are not a regular or traditional framework, these agents work across many layers, including models, APIs, data stores, and workflows. But this efficiency creates more risks that standard security controls cannot fully cover.

Security teams must evaluate not only network and application protections but also how agents interpret context, handle sensitive data, and perform actions autonomously. Without structured governance and monitoring, enterprises risk data exposure, regulatory violations, and operational vulnerabilities.

This guide is a help to get started with a practical, structured way to understand AI agent risks for secure deployment.

| TLDR; |

|---|

| AI agents in 2026 act independently; they access data, use tools, make decisions, update systems, and run complex workflows on their own. |

| Use of AI agents creates new risks: prompt injection, sensitive data leaking through RAG, agents misusing tools or credentials, unpredictable outputs, and behavior changes after updates. |

| You can’t secure just the model; you must protect the full chain, agent identity, tool permissions, data access, every action, and reasoning steps. |

| RAG makes agents smarter but dangerous, locks down vector databases, logs every retrieval, encrypts data, and checks outputs before any action happens. |

| Regulations demand more in 2026: explainable decisions, human review for high-risk actions, full audit logs, memory controls (GDPR/HIPAA), and stop buttons when needed (EU AI Act). |

| Observability is essential track prompts, retrievals, tool calls, and outputs in real time so you can spot problems, prove compliance, and catch drift early. |

| Governance basics you need: clear roles, version control for prompts and models, human checkpoints for important decisions, and regular risk checks. |

| Deploy safely with these steps: map your setup, identify risks, add controls at every layer, monitor continuously, and test aggressively. |

| Reach out to Clustox; we handle secure private hosting, safe RAG, governance, monitoring, and compliance so you can deploy agents with confidence. |

Why Is AI Agent Security a Different Security Problem?

AI agent security is fundamentally different from traditional SaaS security because AI agents are autonomous, context-aware, and capable of interacting with multiple systems in real time.

Table of Contents

Traditional SaaS applications follow deterministic workflows: users trigger predefined actions, and outputs are predictable.

AI agents interpret context, generate reasoning steps, and make decisions dynamically. This behavior introduces new security risks and expands the attack surface in ways that legacy security models were not designed to handle.

Traditional SaaS vs Autonomous AI Agents

The distinction between conventional SaaS and autonomous AI agent development security is critical for understanding risk:

- Predictability: SaaS workflows are static; AI agents act on probabilistic outputs.

- Access Scope: SaaS users operate within defined permissions; AI agents may access multiple systems and tools.

- Decision Autonomy: SaaS executes rules; AI agents decide which actions to take based on context.

- Data Exposure: SaaS stores user input; AI agents process, store, and retrieve data dynamically, often combining multiple sources.

This difference means security and governance strategies for AI agents require adaptive monitoring, behavioral analysis, and robust lifecycle governance.

Rise of Multi-Agent Systems

Enterprises use multi-agent frameworks such as LangChain, CrewAI, and AutoGen to coordinate complex workflows. In these systems, multiple AI agents exchange context, trigger actions, and interact with shared data stores. While this enhances productivity, it introduces new risks.

LLM Security Risks in Production

Deploying LLMs in production introduces unique security concerns:

- Prompt injection attacks: Malicious input overrides system instructions.

- Model poisoning: Corrupt training or retrieval data changes model behavior.

- Output variability: Non-deterministic outputs complicate validation and testing.

- External data leakage: Agents querying RAG systems or vector databases may inadvertently expose sensitive information.

These LLM security risks require continuous logging, monitoring, and automated anomaly detection to ensure safe operation.

AI SaaS and ChatGPT Production Risks

Using third-party AI SaaS, including ChatGPT, introduces additional considerations. CTOs must evaluate AI SaaS security risks as part of enterprise AI security planning, particularly for high-sensitivity workloads.

Agent Autonomy and External Tool Access

AI agent security often operates with external tool access. This autonomy allows agents to query databases, update CRM systems, and trigger workflows. It increases the exposure of every tool that the agent can access, making it a potential attack vector. Security architecture must define strict boundaries and continuously monitor agent activity.

Expanded Attack Surface in RAG Architecture

Retrieval-Augmented Generation (RAG) architecture enhances AI outputs by connecting LLMs to external knowledge sources, including vector databases. Mitigation strategies include enforcing access control on vector stores, logging all retrievals, and validating output content before downstream use.

AI Lifecycle Governance Challenges

AI agents evolve continuously. Model updates, prompt refinements, and orchestration changes can introduce new risks over time. AI lifecycle governance must cover:

- Risk assessments

- Validation of new prompts

- Continuous monitoring

- Audit trails for actions performed

- Integration with compliance requirements for regulations like GDPR, HIPAA, and the EU AI Act

Without robust governance, even small updates can create gaps in security, compliance, and operational reliability.

Secure AI deployment needs clear AI agent architecture layering:

What are the Core Components of Enterprise AI Agent Architecture?

Before addressing risk, CTOs should map the AI system architecture clearly. AI agents typically operate within layered components:

| Component | Operate Details |

|---|---|

| Foundation Model Layer | Provides the necessary compute (GPUs/TPUs), storage, vector databases, networking, and security frameworks to run the agents. Examples include OpenAI models, Anthropic Claude, Google Gemini, Microsoft Azure OpenAI services, and AWS Bedrock-hosted models. |

| Orchestration Layer | Contains the core LLMs or specialized models used for inference, reasoning, and generating responses. Tools like LangChain manage prompt flows, memory, tool calls, and agent reasoning steps. |

| Retrieval Layer | RAG architecture then connects LLMs to a vector database storing embeddings of enterprise documents. |

| Application Layer | Business workflows integrate AI outputs into CRM, ERP, ticketing, or analytics systems. |

| Infrastructure Layer | Cloud or on-prem AI deployment environments run containers, APIs, logging systems, and security controls. |

Key Additions Often Mentioned in 2026 Architectures:

Governance/Security Layer: Crucial for safety, monitoring compliance, and managing data permissions (e.g., in the Salesforce AI stack).

Action/Tooling Layer: Specialized tools that allow agents to execute tasks in external applications (APIs, CRM, ERP).

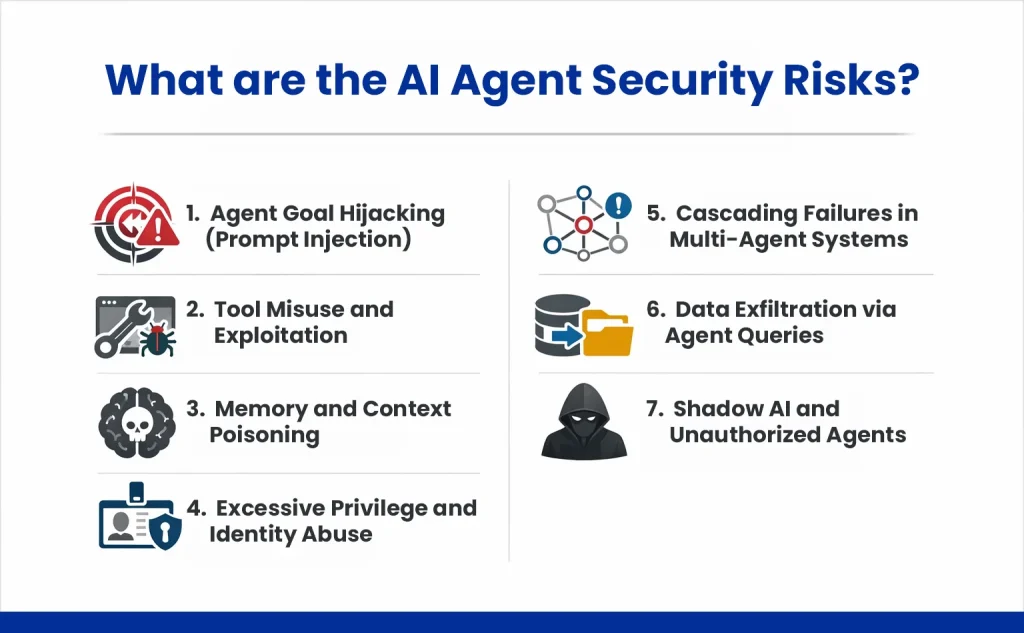

When are the AI Agent Security Risks?

AI agents powered by LLMs can access, process, and even store sensitive information during normal operations. Mismanaging these capabilities can lead to serious compliance violations, intellectual property exposure, and operational risks.

AI agent Security depends on how trust and identity function in enterprise systems.

In a conventional system, user identity drives permissions. With AI agents, an autonomous system may hold its own credentials and execute actions across platforms.

This creates new considerations:

- Agent identity management

- Credential rotation policies

- API rate limiting

- Access scope restriction

- Tool execution validation

Zero-trust architecture becomes especially relevant. Each agent action should require validation, even within internal networks.

Kubernetes AI deployment environments require network segmentation, pod security policies, and container runtime scanning. AI infrastructure security should align with existing DevSecOps practices.

When using cloud providers or third-party model services, review their compliance posture carefully. If the organization uses platforms such as OpenAI, ChatGPT Enterprise, Google Gemini, Microsoft Azure OpenAI, or AWS Bedrock, verify how they manage data isolation and logging.

How AI Agent Behavior Creates Regulatory Risk in 2026

Autonomous AI agents expand regulatory exposure in ways traditional software does not. Unlike static systems, AI agents can generate derived data, persist contextual memory, access multiple tools, and execute decisions without real-time human oversight.

For CTOs, compliance in 2026 is no longer just about securing databases. It requires governing how AI agents reason, retrieve information, and act across enterprise systems.

EU AI Act

The EU AI Act classifies that AI agents may qualify as high-risk systems when they influence financial, healthcare, employment, or eligibility decisions. The Act requires documented risk management, transparency, and meaningful human oversight.

CTO focus: Ensure agent decisions are explainable, logged, version-controlled, and interruptible through human-in-the-loop checkpoints.

GDPR (General Data Protection Regulation)

AI agents can infer new personal data, retrieve it through RAG systems, or retain it in memory layers. This creates exposure even if the user did not explicitly submit personal data in the current session.

CTO focus: Control memory retention, enforce retrieval logging, enable data deletion workflows, and minimize storage of intermediate reasoning artifacts.

Health Insurance Portability and Accountability Act of 1996 (HIPAA)

For enterprises in healthcare or handling protected health information (PHI), AI agents must comply with HIPAA. Autonomous summarization without review can introduce compliance or clinical risk.

CTO focus: Encrypt PHI, maintain audit trails for agent outputs, and require human review for high-impact medical workflows.

PCI-DSS (Payment Card Data)

Enterprises handling credit card information must align AI agents with PCI-DSS requirements: AI agents integrated with billing systems may access or log payment-related data through APIs, prompts, or memory layers.

CTO focus: Use scoped API keys, mask sensitive fields in prompts, encrypt data in transit and at rest, and monitor agent-to-payment interactions in real time.

SOC 2 and ISO 27001

AI agents introduce non-deterministic outputs and evolving behavior, complicating traditional control validation.

CTO focus: Implement drift detection, structured logging of agent actions, and formal change management for prompts and models.

Don’t let security risks derail your AI initiatives. Proactively address prompt injection, data leakage, and compliance challenges with a structured security strategy.

How Should Founders Structure an AI Governance Strategy?

Autonomous AI agent development and security operate across data, infrastructure, and workflows, making traditional IT oversight insufficient.

Governance ensures that AI agents behave predictably, respect regulations, and deliver value without introducing unacceptable risks. Below are some key areas you can focus on to structure:

| Governance Area | Key Actions | for CTOs | Tools / Practices |

|---|---|---|---|

| AI Lifecycle Oversight | Monitor all stages: data collection, training, deployment, updates | Visibility into risk at every stage ensures consistency | Version control, pipeline tracking, CI/CD monitoring |

| Roles & Responsibilities | Define ownership for design, deployment, monitoring, and compliance | Clear accountability reduces operational gaps | RACI charts, team alignment |

| Human-in-the-Loop (HITL) | Review critical outputs and high-risk decisions | Reduces errors, increases trust in AI outputs | Approval workflows, manual validation checkpoints |

| Explainable AI (XAI) | Track model reasoning and outputs | Transparency for audits, regulatory compliance | Model interpretability tools, output logging |

| Model Monitoring & Drift Detection | Detect shifts in output patterns and agent behavior | Prevents unreliable or unsafe outputs | Drift detection algorithms, anomaly monitoring |

| Responsible AI Practices | Ensure fairness, transparency, privacy-by-design | Reduces ethical and reputational risks | Bias testing, privacy compliance checks |

| Compliance Automation & Audit Trails | Automate policy checks, maintain tamper-proof logs | Simplifies audits, ensures regulatory adherence | Logging frameworks, automated compliance checks |

| Data Governance & Lineage | Track data sources, movements, and usage | Supports accountability and explains outputs | Data cataloging, lineage tracking systems |

How do you architect secure AI deployment at scale?

AI systems operate across multiple layers, models, workflows, data stores, and external tools, so architects must design security into every component.

First, isolate the AI agent security and environment. Whether using private LLM hosting or on-prem AI deployment, restrict network exposure.

Second, secure the RAG architecture. Control document ingestion processes. Monitor vector database access patterns.

Third, implement AI observability. AI logging and monitoring systems should capture:

- Prompt metadata

- Retrieval sources

- Tool calls

- Output classifications

Fourth, enforce data encryption at rest across storage systems.

The following table outlines architectural controls aligned with deployment layers.

| Architecture Layer | Security Focus | Example Controls |

|---|---|---|

| Model Layer | Isolation and vendor review | Private hosting, contract review |

| Orchestration Layer | Prompt control | Input validation, system prompt isolation |

| Retrieval Layer | Data access control | Encrypted vector database, RBAC |

| Application Layer | Action validation | Approval workflows, human review |

| Infrastructure Layer | Network security | Zero-trust architecture, segmentation |

How do you prevent prompt injection and LLM exploitation?

Autonomous AI agents powered by LLMs face unique threats such as prompt injection and model exploitation. These attacks can override instructions, manipulate outputs, or leak sensitive data if not mitigated. A structured approach is critical for technical officers to maintain secure AI deployment.

Prompt Injection Mitigation

Validate and sanitize all inputs to AI agents. Limit system instructions that can be influenced by external prompts. Consider context isolation to prevent cross-contamination between user queries and internal instructions.

Model Poisoning Detection

Continuously monitor model outputs for anomalies that could indicate poisoned data during fine-tuning or embedding updates. Implement automated checks for unusual reasoning patterns or unexpected behavior.

Context Window Isolation & Secure RAG Design

Segregate memory and retrieval contexts. Ensure that vector databases used in RAG architectures enforce strict role-based access, encryption, and query monitoring.

Adversarial Red Teaming

Simulate attacks to proactively uncover vulnerabilities in AI reasoning or retrieval flows.

AI Content Hygiene & Optimization

Protect LLM indexing signals, structured AI content, and AI citation optimization workflows to prevent malicious content from affecting model outputs.

By combining input validation, monitoring, secure RAG design, and adversarial testing, enterprises can significantly reduce the risk of prompt injection and LLM exploitation.

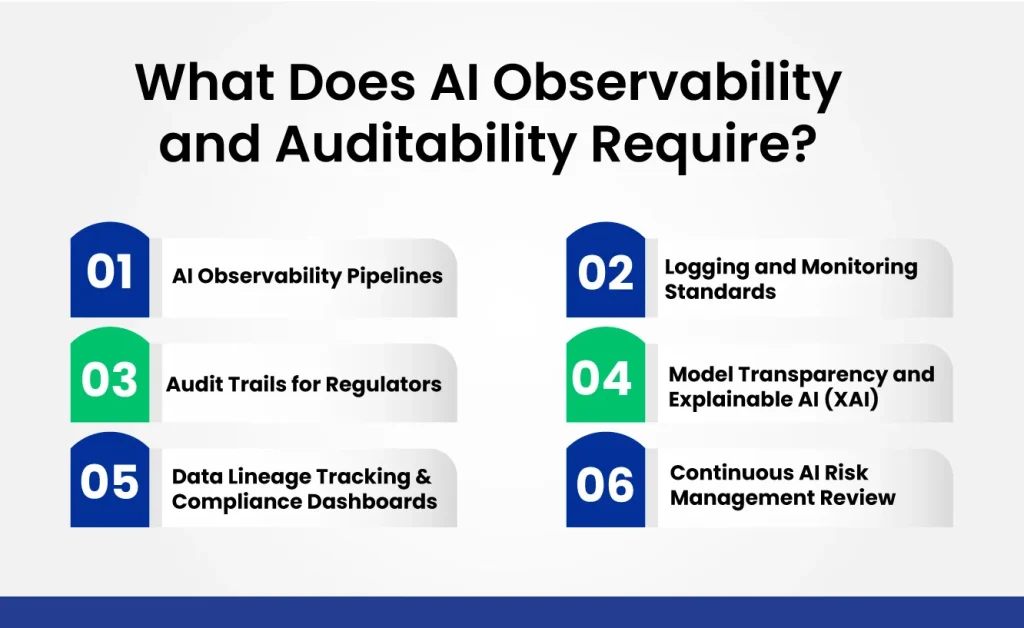

What Does AI Observability and Auditability Require?

For enterprises, deploying AI agents without observability and auditability is a blind spot waiting for a risk to surface. You can ensure that every AI system can be monitored, explained, and traced to maintain operational safety, regulatory compliance, and stakeholder trust.

AI Observability Pipelines

Build pipelines that continuously track agent behavior, model outputs, data access, and workflow executions. Observability provides real-time insights into anomalies, unusual activity, or potential errors before they escalate.

Logging and Monitoring Standards

Implement structured AI logging for all agent actions, including API calls, tool invocations, and data interactions. Standardized monitoring allows rapid detection of performance issues, security incidents, or policy violations.

Audit Trails for Regulators

Maintain tamper-proof records detailing agent decisions, data retrieval, and workflow triggers. Audit trails demonstrate accountability and compliance with frameworks.

Model Transparency and Explainable AI (XAI)

Capture reasoning paths and outputs to explain agent decisions to auditors, business stakeholders, or end users. Transparency is essential for trust and regulatory alignment.

Data Lineage Tracking & Compliance Dashboards

Track the origin, movement, and transformation of all data used by AI agents. Dashboards provide CTOs with a single view of compliance, risk, and operational performance.

Continuous AI Risk Management Reviews

Regularly update observability strategies to account for model drift, new workflows, or regulatory changes. Observability and auditability are ongoing disciplines, not one-time setups.

By integrating pipelines, monitoring, XAI, lineage tracking, and continuous reviews, enterprises achieve full visibility into AI operations while maintaining regulatory defensibility.

How can Enterprises Future-proof AI Regulatory Compliance?

Enterprise must proactively prepare to ensure autonomous AI agents remain secure, auditable, and aligned with global standards in 2026 and beyond.

AI Regulatory Compliance 2026 Preparation

Map AI systems to applicable regulations. Identify high-risk workflows and document control measures before deployment.

AI Compliance Automation

Implement automated checks to validate data handling, model outputs, and agent actions against enterprise policies. Automation reduces human error and ensures continuous adherence to regulatory standards.

Structured AI Content for Audit Readiness

Maintain structured, entity-based documentation of AI models, prompts, and retrieval workflows. This makes audits efficient and defensible.

Generative Engine Optimization (GEO) & Visibility Strategies

Optimize AI outputs with traceable references and semantic structuring. Use AI citation optimization and answer engine optimization to create outputs that are explainable, verifiable, and compliant.

Enterprise Knowledge Base Integration

Align AI documentation with internal knowledge repositories, ensuring that content, indexing, and retrieval strategies support both operational efficiency and regulatory defensibility.

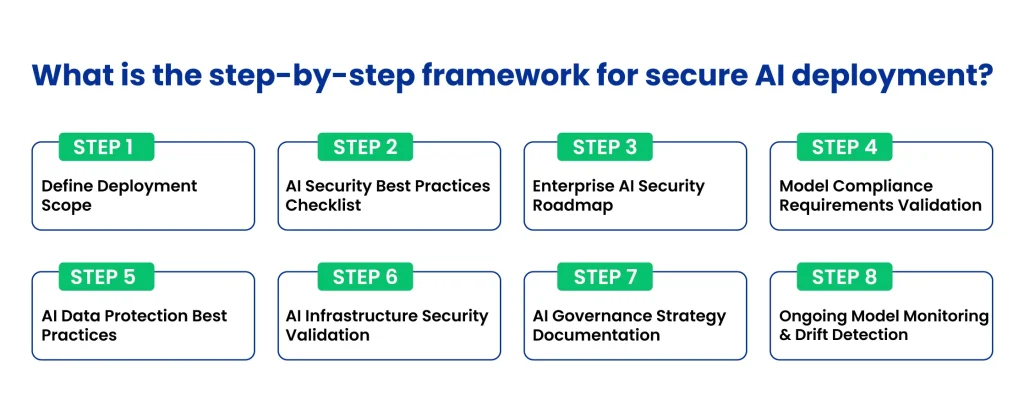

What is the step-by-step framework for secure AI deployment?

Deploying AI agents securely at scale requires a structured, step-by-step framework that addresses both technical and compliance considerations. CTOs must combine practices, governance, and ongoing monitoring to reduce risk and ensure operational reliability.

- Define Deployment Scope: Identify which AI agents, workflows, and data sources will be part of the deployment. Classify high-risk processes and sensitive datasets.

- AI Security Best Practices Checklist: Implement least-privilege access, zero-trust architecture, API rate limiting, and data encryption at rest and in transit. Limit agent autonomy to only necessary actions.

- Enterprise AI Security Roadmap: Create a timeline for secure deployment, including infrastructure hardening, model validation, and governance implementation. Align teams across engineering, security, and compliance.

- Model Compliance Requirements Validation: Ensure models meet regulatory and internal policies before deployment. Include data retention, privacy, and audit requirements.

- AI Data Privacy Risks: Protect sensitive data through encryption, access controls, and vector database safeguards. Fine-tuning pipelines should prevent proprietary data leakage.

- AI Infrastructure Security Validation: Confirm cloud or on-prem deployments follow security standards, including private LLM hosting, Kubernetes best practices, and network isolation.

- AI Governance Strategy Documentation: Record roles, responsibilities, monitoring protocols, and escalation procedures. Include human-in-the-loop checkpoints for high-risk actions.

- Ongoing Model Monitoring & Drift Detection: Continuously track agent outputs, behavior, and context use. Detect anomalies, model drift, or unauthorized access, and update controls accordingly.

Secure Your Enterprise AI with Clustox

Clustox partners with enterprises to design, implement, and monitor AI systems that are robust, auditable, and aligned with global regulatory standards. From private LLM hosting and secure RAG architectures to AI lifecycle governance and observability, Clustox ensures your AI deployments are safe, scalable, and compliant.

Want help building this the right way?

Get in touch with Clustox to accelerate secure AI adoption and reduce operational risk while unlocking the full potential of autonomous AI agents across your enterprise workflows.

FAQs

What is AI agent security?

AI agent security is all about keeping those smart, independent AI agents safe, the ones that don’t just chat but actually access your data, use tools, make decisions, and run real workflows on their own. It’s way more than model protection; it’s controlling their access, memory, actions, and reasoning so they don’t leak info, get tricked, or break rules.

How to secure AI agents?

To secure AI agents properly, give limited credentials that rotate often, use zero-trust for every action, harden RAG with strict access and logging, block prompt injection with input checks, log everything (prompts, tools, outputs), add human review for risky stuff, and watch constantly for weird behavior or drift.

Is ChatGPT secure for enterprise?

Not fully out of the box for sensitive work. ChatGPT (or similar SaaS) can leak data if you prompt carelessly, and you don’t control memory or RAG deeply. Use enterprise versions with better isolation, mask sensitive info, monitor calls, and prefer private hosting for anything critical. Third-party SaaS adds extra risk you can’t fully eliminate.

How to comply with GDPR when using AI?

Keep personal data under tight control: limit how long agents remember things, log every retrieval, delete data when asked, isolate sessions, avoid inferring new personal info accidentally, and build in deletion workflows. Privacy-by-design and regular checks keep you GDPR-safe, especially with RAG pulling enterprise docs.

AI compliance checklist

Quick checklist for 2026:

- Classify high-risk agents/workflows

- Map to regs (EU AI Act, GDPR, HIPAA, PCI-DSS, SOC 2)

- Log decisions, retrievals, reasoning

- Add human oversight for big calls

- Track data lineage and encryption

- Monitor drift and automate policy checks

- Keep versioned prompts/models and audit trails

AI audit requirements

Audits want clear, tamper-proof records: full traces of prompts

- Retrievals

- Reasoning

- Actions

- Outputs

Make reasoning explainable, feed logs to SIEM, show data lineage, and have dashboards proving compliance (EU AI Act loves transparency, GDPR needs deletion proof, HIPAA wants PHI trails).

AI risk assessment process

Map your whole setup (models, orchestration, RAG, tools, infra). Spot high-risk spots (sensitive data, autonomous decisions). List threats (injection, leaks, drift). Classify everything, test with red-teaming, apply fixes layer by layer, document it, and re-check every time you update prompts or models.

What is an AI governance framework?

Use this simple 8-step path:

- Define what agents, workflows, and data you’re using and flag risks

- Apply least-privilege, zero-trust, encryption, and limited powers

- Make a team roadmap with clear timelines

- Check models meet compliance rules first

- Encrypt data, lock vector DBs, stop fine-tune leaks

- Harden hosting (private LLM, isolated networks)

- Document roles, monitoring, and human reviews

- Watch outputs and behavior forever catch drift fast

AI agent deployment is complex. Ensure your systems are secure, compliant, and scalable from day one.

Share your thoughts about this blog!