The use of artificial intelligence in day-to-day business operations is growing quickly. Companies use AI systems to build smarter products, automate decisions, and analyze massive datasets.

Yet a critical question emerges: how does an idea actually move through the AI development lifecycle into a production system?

Many teams struggle at this stage. Industry research shows that 88% of AI pilots never reach full production deployment, mainly due to weak data readiness, unclear objectives, and infrastructure challenges. This gap highlights how complex the AI development process can be when organizations attempt to move experiments into real applications.

A structured AI model development lifecycle helps teams move step by step, from data preparation to AI system deployment. A clear AI deployment strategy then connects model training, infrastructure, and monitoring into a stable pipeline.

Curious how companies move AI from idea to production successfully?

In this blog, you’ll learn the stages, tools, and systems that shape modern AI development.

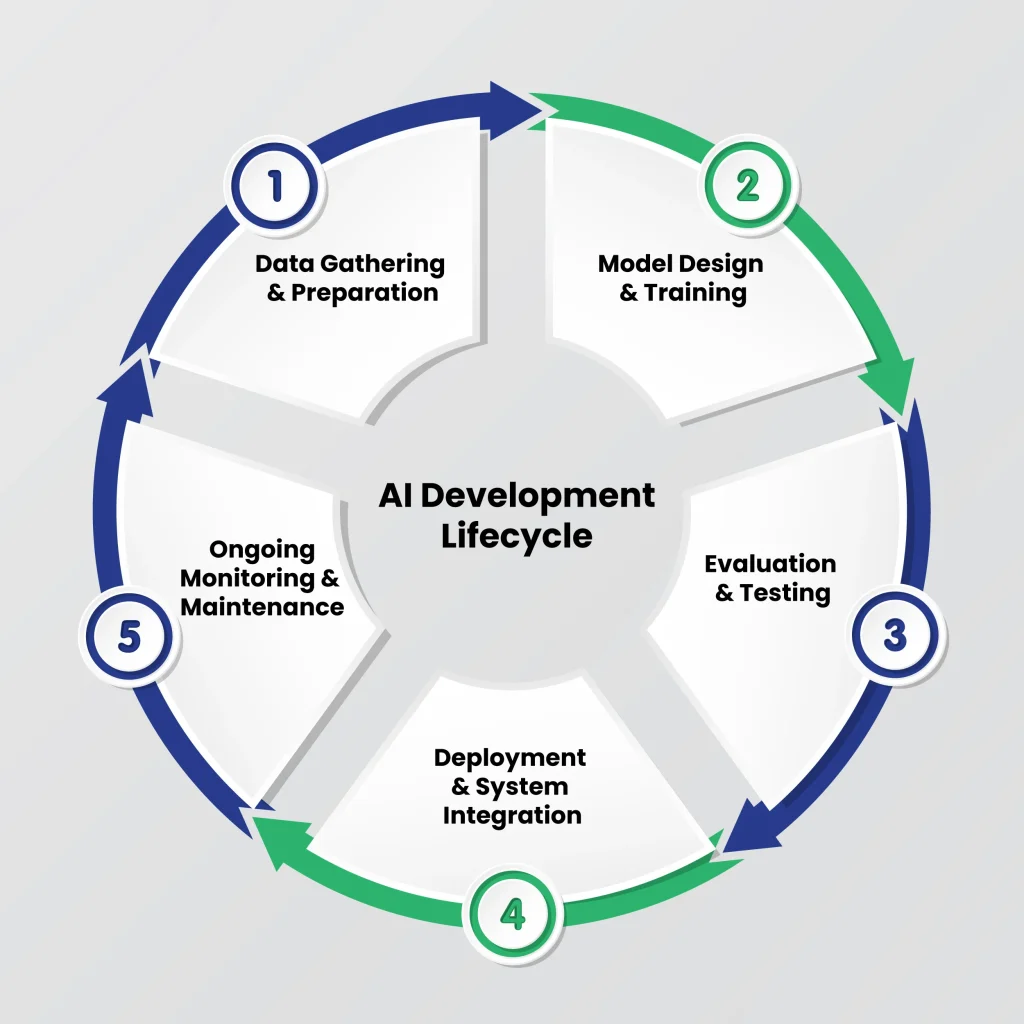

How Does the AI Development Lifecycle Work, and What Are Its Stages?

The AI development lifecycle is a structured roadmap that guides AI projects from the initial idea to production deployment. It helps businesses turn concepts into reliable, scalable AI solutions while reducing errors and inefficiencies.

Each step, including problem definition, AI model training and testing, and maintenance, is carefully planned to improve project success.

Benefits of Following an AI Development Lifecycle Include:

- Improved project efficiency: Clear steps prevent wasted effort and reduce delays.

- Higher model accuracy: Structured workflows ensure quality data, proper training, and rigorous evaluation.

- Better alignment with business goals: Each stage incorporates business objectives, making the AI solution practical and useful.

- Scalability and maintainability: Deployed models are easier to update and adapt to changing data or business needs.

These advantages show why following the AI lifecycle is crucial for successful AI projects. Understanding each stage is key to building reliable AI solutions.

The following sections break down the stages of the AI development lifecycle and explain what happens at each step.

Stage 01: Data Collection and Preparation

With a clear problem in mind, the first step in building an AI solution is to collect and prepare the right data. This stage forms the foundation of the AI development lifecycle.

The quality, relevance, and accuracy of your data directly affect how well your AI or machine learning model performs. Poor data can lead to inaccurate predictions, model bias, or project delays.

Essential Steps in Data Collection and Preparation Include:

- Data Collection: Gather data from multiple sources, including internal databases, public datasets, APIs, and third-party providers. Ensure the data is relevant to the AI project goals.

- Data Cleaning: Remove duplicates, correct errors, and handle missing values to maintain data quality and integrity.

- Data Labeling: Annotate data accurately for supervised learning tasks. Proper labeling ensures the AI model can recognize patterns correctly.

- Data Formatting and Normalization: Standardize data formats, scales, and structures so the dataset is consistent and ready for training.

- Compliance and Security: Ensure sensitive data is protected, and the dataset complies with applicable industry regulations, such as GDPR or HIPAA.
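
The cleaning and normalization steps above can be sketched in a few lines of Python. This is a minimal illustration using only the standard library, not a production pipeline: the `clean_records` helper, the record layout, and the choice of mean imputation and min-max scaling are all assumptions made for the example, and it assumes at least one observed value exists for the field.

```python
from statistics import mean


def clean_records(records, numeric_field):
    """Deduplicate, fill missing values with the mean, and min-max scale one field."""
    # 1. Remove exact duplicates while preserving the original order.
    seen = set()
    unique = []
    for rec in records:
        key = tuple(sorted(rec.items()))
        if key not in seen:
            seen.add(key)
            unique.append(dict(rec))

    # 2. Fill missing values with the mean of the observed values.
    observed = [r[numeric_field] for r in unique if r.get(numeric_field) is not None]
    fill = mean(observed)
    for r in unique:
        if r.get(numeric_field) is None:
            r[numeric_field] = fill

    # 3. Min-max normalize the field to the range [0, 1].
    lo = min(r[numeric_field] for r in unique)
    hi = max(r[numeric_field] for r in unique)
    span = (hi - lo) or 1.0  # avoid division by zero when all values are equal
    for r in unique:
        r[numeric_field] = (r[numeric_field] - lo) / span
    return unique


raw = [
    {"id": 1, "age": 20},
    {"id": 1, "age": 20},    # exact duplicate
    {"id": 2, "age": None},  # missing value
    {"id": 3, "age": 40},
]
cleaned = clean_records(raw, "age")
print(cleaned)
```

Mean imputation and min-max scaling are only two of several common choices. The right approach depends on the data and the model being trained.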

Well-prepared data improves AI model accuracy and overall performance, reduces errors during training and evaluation, and accelerates and streamlines machine learning model development.

Clean, structured data also enables easier integration with AI tools, frameworks, and platforms, supporting scalability and long-term project success.

Careful data preparation lays a strong foundation for the next stage, model development and training, where this organized data is used to train effective, scalable AI models.

Stage 02: Model Development and Training

Once the data is clean, labeled, and prepared, the next step in the AI development lifecycle is model development and training. This stage focuses on selecting the right algorithms, designing the model architecture, and teaching the AI system to recognize patterns from the data.

The goal is to create a model that can make accurate predictions or decisions based on the data it receives.

Steps in Model Development and Training Include:

- Algorithm Selection: Choose the most suitable machine learning or AI algorithm based on the problem type, data characteristics, and desired outcomes.

- Model Design: Define the model’s architecture, considering factors such as complexity, scalability, and performance requirements.

- Training the Model: Use the prepared datasets to train the model and adjust it to learn patterns effectively.

- Evaluation and Validation: Test the model using separate validation data to measure accuracy, precision, and recall.

- Iteration and Tuning: Refine the model by adjusting parameters and retraining until it reaches optimal performance.
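
To make the train, validate, and tune loop above concrete, here is a small sketch that fits a straight line with gradient descent and picks a learning rate using held-out validation data. The toy dataset, the `train_linear` and `mse` helpers, and the candidate learning rates are assumptions chosen for illustration. Real projects would typically rely on a framework such as scikit-learn, TensorFlow, or PyTorch.

```python
def train_linear(xs, ys, learning_rate, epochs=500):
    """Fit y = w*x + b by batch gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


def mse(w, b, xs, ys):
    """Mean squared error of the line y = w*x + b on the given points."""
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


# Toy data that follows y = 2x + 1 exactly (an assumption for the example).
xs = [i / 10 for i in range(20)]
ys = [2 * x + 1 for x in xs]

# Hold out every fourth point as validation data; train on the rest.
pairs = list(zip(xs, ys))
train = [p for i, p in enumerate(pairs) if i % 4 != 0]
val = [p for i, p in enumerate(pairs) if i % 4 == 0]
train_x, train_y = zip(*train)
val_x, val_y = zip(*val)

# Iteration and tuning: try several learning rates, keep the best on validation.
best = None
for lr in (0.001, 0.01, 0.1):
    w, b = train_linear(train_x, train_y, lr)
    score = mse(w, b, val_x, val_y)
    if best is None or score < best[0]:
        best = (score, lr, w, b)

best_score, best_lr, best_w, best_b = best
print(f"learning rate {best_lr}: w={best_w:.3f}, b={best_b:.3f}")
```

The key design point is that the learning rate is chosen on data the model never trained on, which is what keeps tuning from simply rewarding memorization.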

During this stage, collaboration with experts in software development services can be crucial. Partnering with skilled teams ensures the model is implemented efficiently, integrates effortlessly with your systems, and meets project requirements.

This also helps in applying best practices for code quality, architecture, and scalability. Proper model development and training set the stage for testing and validation, where the trained model is checked before it moves into a production environment to start delivering real-world results.

Stage 03: Testing and Validation

After developing and training the AI model, the next critical step in the AI development lifecycle is testing and validation. This stage ensures that the model performs as expected and delivers accurate, reliable results when applied to real-world data.

Proper testing identifies weaknesses, prevents errors, and improves overall system quality before deployment.

Essential Testing and Validation Procedures Include:

- Model Evaluation: Assess the model’s performance using various metrics such as accuracy, precision, recall, and F1 score.

- Validation with Real Data: Test the model on unseen or real-world data to ensure it generalizes well beyond the training set.

- Stress and Scenario Testing: Check how the model behaves under extreme or unusual conditions.

- Error Analysis and Correction: Identify areas where the model is underperforming and make necessary adjustments.

- Documentation: Record testing results, assumptions, and limitations for transparency and future reference.
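
The evaluation metrics named above follow directly from the counts of true and false positives and negatives. The sketch below computes them for a binary classifier. The `classification_metrics` helper and the sample labels are assumptions for illustration, and returning 0.0 when a denominator is zero is one convention among several.

```python
def classification_metrics(y_true, y_pred, positive=1):
    """Compute accuracy, precision, recall, and F1 for binary labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)

    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total if total else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (
        2 * precision * recall / (precision + recall)
        if (precision + recall)
        else 0.0
    )
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Hypothetical labels from an unseen test set.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]
metrics = classification_metrics(y_true, y_pred)
print(metrics)
```

Accuracy alone can be misleading on imbalanced data, which is why precision, recall, and F1 are usually reported alongside it.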

Collaborating with experts in software quality assurance and testing services can significantly enhance this stage. Professional QA teams help implement rigorous testing procedures, catch potential issues early, and ensure that the AI model meets both performance and compliance standards.

Thorough testing and validation prepare the AI model for the next stage: deployment and integration, where it can be effectively applied to real business environments and deliver measurable results.

Stage 04: Deployment & Integration

Once the AI model has been developed, trained, and thoroughly tested, it moves into the deployment and integration stage. This step brings the model from a controlled environment into real-world applications, where it can start delivering insights and supporting business decisions.

Proper deployment ensures the model operates efficiently, scales with demand, and integrates smoothly with existing systems.

Steps in Deployment and Integration Include:

- Deployment Strategy: Decide whether to deploy the model on-premises, in the cloud, or in a hybrid environment based on business requirements and infrastructure.

- System Integration: Connect the AI model to existing applications, databases, and software tools to ensure complete functionality.

- Monitoring in Production: Continuously track model performance, response times, and system health to detect issues early.

- User Training and Adoption: Equip teams with the knowledge to effectively use the AI system and interpret its outputs.

- Performance Review: Collect feedback from real-world use to measure impact and identify areas for improvement.
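
System integration usually means placing the model behind an interface that other applications can call. The sketch below shows the core of such an interface as a plain function that validates a JSON request and returns a status code with a JSON response. The `ThresholdModel` class, the `handle_request` function, and the `score` field are hypothetical names invented for this example. In practice, a web framework or model-serving platform would wrap logic like this.

```python
import json


class ThresholdModel:
    """Hypothetical stand-in for a trained model: flags scores at or above a cutoff."""

    def __init__(self, cutoff):
        self.cutoff = cutoff

    def predict(self, features):
        return 1 if features["score"] >= self.cutoff else 0


def handle_request(model, body):
    """Validate a JSON request body and return (status_code, JSON response body)."""
    try:
        payload = json.loads(body)
    except json.JSONDecodeError:
        return 400, json.dumps({"error": "body is not valid JSON"})

    score = payload.get("score") if isinstance(payload, dict) else None
    # Reject missing or non-numeric input before it reaches the model.
    if isinstance(score, bool) or not isinstance(score, (int, float)):
        return 400, json.dumps({"error": "'score' must be a number"})

    prediction = model.predict({"score": score})
    return 200, json.dumps({"prediction": prediction})


model = ThresholdModel(cutoff=0.5)
status, response = handle_request(model, '{"score": 0.8}')
print(status, response)
```

Keeping validation separate from the model makes malformed input fail fast with a clear error instead of producing an unreliable prediction.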

Successful deployment and integration transform the AI model from a theoretical solution into a practical tool that drives measurable results. This stage also lays the groundwork for ongoing maintenance and updates, ensuring the AI system remains effective and reliable over time.

Stage 05: Monitoring and Maintenance

After deployment, the AI model begins operating in a real-world environment. This stage focuses on monitoring performance and maintaining the system to ensure it continues delivering accurate and reliable results.

Data patterns, user behavior, and business requirements often change over time, affecting the model’s performance.

Ongoing monitoring catches performance degradation early, and continuous maintenance allows teams to improve the model as new data becomes available.

Monitoring and Maintenance Activities Include:

- Performance Monitoring: Track prediction accuracy, response time, and system performance to ensure the model operates as expected.

- Model Drift Detection: Identify changes in data patterns that may degrade the AI model’s accuracy over time.

- Data Updates: Add new and relevant data to keep the model aligned with current trends and user behavior.

- Model Retraining: Retrain the model periodically so it continues producing reliable results.

- System Updates: Maintain infrastructure, libraries, and dependencies to keep the AI system stable and secure.
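
One common way to detect drift in a single numeric feature is the Population Stability Index (PSI), which compares the distribution of recent production data against a baseline such as the training set. The sketch below is a minimal version under stated assumptions: the `population_stability_index` helper, the equal-width binning, the small floor for empty bins, and the sample data are illustrative choices rather than a standard implementation.

```python
import math


def population_stability_index(baseline, recent, bins=5):
    """PSI between two samples, using equal-width bins derived from the baseline."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values to edge bins
            counts[idx] += 1
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    expected = proportions(baseline)
    actual = proportions(recent)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


# Hypothetical feature values: the baseline is spread evenly over [0, 1).
baseline = [i / 100 for i in range(100)]
stable = [i / 50 for i in range(50)]             # same spread, fewer samples
shifted = [0.5 + i / 200 for i in range(100)]    # concentrated in the upper half

psi_stable = population_stability_index(baseline, stable)
psi_shifted = population_stability_index(baseline, shifted)
print(f"stable: {psi_stable:.3f}, shifted: {psi_shifted:.3f}")
```

A frequently cited rule of thumb treats PSI values above roughly 0.25 as a significant shift worth investigating, though teams should calibrate any threshold against their own data before using it to trigger retraining.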

Ongoing monitoring and maintenance ensure that the AI solution continues to perform effectively long after deployment. Continuous improvements help organizations adapt to evolving data and maintain long-term value from their AI systems.

88% of AI pilots never reach production. Get expert guidance from Clustox to help your AI models perform accurately, scale efficiently, and produce measurable results.

How Does Clustox Support Every Stage of the AI Development Lifecycle?

Turning an AI idea into a fully functional solution can be challenging. Data issues, model inefficiencies, and deployment hurdles often slow progress. Moreover, businesses need a reliable partner to guide them through each stage of the AI development lifecycle.

Clustox works closely with organizations to ensure concepts transform into practical, production-ready AI systems.

Clustox handles every stage of AI development with precision:

- Data Collection & Preparation: Clustox ensures datasets are clean, structured, and compliant, providing a solid foundation for accurate AI models.

- Model Development & Training: Clustox helps select the right algorithms, design scalable architectures, and train models efficiently for real-world performance.

- Testing & Validation: Rigorous QA, validation on real data, and scenario testing ensure models are reliable and ready for deployment.

- Deployment & Integration: Clustox streamlines deployment and integrates AI models smoothly with existing systems.

- Monitoring & Maintenance: Continuous monitoring, retraining, and system updates keep AI solutions accurate, effective, and aligned with evolving business needs.

Additionally, by supporting every stage, Clustox helps businesses reduce risks, improve efficiency, and maximize the long-term impact of their AI initiatives.

With their expertise, organizations can confidently move from concept to real-world AI solutions while maintaining scalability and reliability.

Frequently Asked Questions (FAQs)

2. Why Do Many AI Projects Fail Before Production Deployment?

Many AI projects fail before deployment due to poor data quality, unclear objectives, and infrastructure limitations.

In addition, teams often underestimate the effort required to move models from experimentation to production. A structured AI development lifecycle helps reduce these risks and improves the chances of successful deployment.

3. How Long Does It Take To Develop and Deploy an AI Model?

The development time for an AI model varies depending on project complexity, dataset size, and infrastructure readiness. Simple models may take a few weeks, while enterprise AI systems can take several months.

A clear development process helps teams reduce delays and manage the deployment pipeline more efficiently.

4. Can AI Models Improve After Deployment?

Yes. AI models can improve after deployment through continuous monitoring and retraining. As new data becomes available, teams update datasets and retrain models to maintain accuracy. This process also helps detect model drift and ensures that the AI system continues to deliver reliable results.

5. What Tools are Commonly Used in the AI Development Lifecycle?

Several tools support different stages of the AI development lifecycle. For example:

- TensorFlow and PyTorch for model development

- Scikit-learn for machine learning tasks

- Docker and Kubernetes for deployment

- MLflow or Kubeflow for managing ML workflows

These tools help teams build, deploy, and monitor AI systems efficiently.

Final Takeaway

Artificial intelligence development follows a structured path that moves from data preparation to model development, testing, deployment, and ongoing monitoring. Each stage contributes to building reliable, scalable, and effective AI systems that can support real business use cases.

When every phase of the AI development lifecycle is handled carefully, organizations can improve model performance, reduce deployment risks, and adapt their solutions as data and business needs evolve.

For many businesses, managing these stages also requires the right technical strategy and expertise. Working with experienced teams through professional AI consulting services can help organizations plan their AI initiatives, select the right technologies, and implement solutions that align with long-term goals.

With the right approach, companies can move from experimentation to practical AI adoption while maintaining efficiency, scalability, and long-term value.

AI deployments shouldn’t stall. Ensure your models perform reliably, scale smoothly, and deliver maximum results.
